The Tsinghua Gen-Z Kid Who Wants to Take On GPT | WAVES

Creating "Homo sapiens" — self-directed learning + thinking.

By Muxin Xu

Edited by Jing Liu

Months ago, an internal OpenAI image circulated online. In it, OpenAI mapped out its path to AGI across five stages:

Level 1: Chatbots — AI with conversational abilities.

Level 2: Reasoners — AI that can solve problems like humans.

Level 3: Agents — AI systems that don't just think, but can take action.

Level 4: Innovators — AI that can assist in invention and creation.

Level 5: Organizations — AI that can perform organizational work.

The roadmap looks beautiful, but most of the industry is still stuck at L1. The most glaring example: because large models lack reasoning capabilities, they can't even answer "which is bigger, 9.8 or 9.11." The Transformer architecture can only fit answers to patterns in massive amounts of data, rather than answering questions or reasoning the way humans do. And because it can't perform multi-step reasoning, your AI agent can't generate a plan with one click, which keeps many AI application scenarios out of reach.

The Transformer, once seen as a revolutionary force in AI, is now facing a revolution of its own. Guan Wang is among those revolutionaries. Rather than using RL to squeeze every drop of potential from LLMs, Wang chose to build a general-purpose, RL-based large model directly, bypassing the theoretical limitations of LLMs. It is an approach that better aligns with how fast and slow thinking actually work in practice.

I waited at our agreed-upon spot for a while before this Tsinghua graduate, born in 2000, hurried over from campus. Lean and wiry, dressed in plain athletic wear, carrying a backpack — he looked like the science whiz you'd see anywhere on a university campus.

Like the genius geeks on The Big Bang Theory, Wang can be especially hard to talk to if you're not technical. He adopts a humble manner while rattling off jargon, racking his brain to simplify his explanations, often without success. Some technical questions he can't answer right away: he sits in silence for a long time and, only after an awkward pause, produces what he considers precise wording. When discussing his field, he gets excited and talks nonstop, sometimes forgetting to breathe until he suddenly tilts his head back for a long gasp of air.

But this is the same person who named his newly developed architecture Sapient Intelligence. The name, which evokes Homo sapiens, the "wise human," reveals his ambition.

Right now, though the NLP world remains dominated by Transformers, more and more new architectures are emerging and charging toward L2. There's the TransNAR hybrid architecture that DeepMind proposed on paper this year; Sakana.AI, co-founded by Llion Jones, one of the eight authors of the Transformer paper; Bo Peng's RWKV; and even OpenAI's new model "Strawberry," which it claims has reasoning capabilities.

The Transformer's limitations are becoming increasingly evident, with problems such as hallucination and limited accuracy still unsolved. Capital is beginning to flow, tentatively, into these new architectures.

Austin, co-founder of Sapient, told An Yong Waves: Sapient has currently completed a seed round of tens of millions of dollars, led by Vertex Ventures (backed by Singapore's Temasek Holdings), with joint investment from Japan's largest venture capital group, as well as top-tier VCs from Europe and the US. The funding will primarily go toward computing expenses and global talent recruitment, with Minerva Capital serving as long-term exclusive financial advisor.

With Sapient, you can see the typical path of a Chinese AI startup: Chinese founder, targeting global markets from day one, recruiting global algorithm talent, and securing support from international funds. But its atypical aspects are equally prominent: compared to more application-focused companies, this is a player trying to compete with others on technical merit.

Guan Wang (left) and Austin (right)

"WAVES" is a column by An Yong. Here, we present stories and spirits of a new generation of entrepreneurs and investors.

Can GPT Lead to AGI?

Technology iterates with brutal speed.

Not long after the large language model boom began, Turing Award winner and "AI Godfather" Yann LeCun publicly advised young students who want to enter the AI industry: "Stop studying LLMs. You should research how to break through their limitations."

The reason: human reasoning can be divided into two systems. System 1 is fast and unconscious, suited to simple tasks like "what should I eat today?" System 2 handles tasks that require deliberate thought, like solving a complex math problem. LLMs cannot perform System 2 tasks, and scaling laws can't fix that, because it is a fundamental architectural constraint.

"Current large models are more like memorizing answers," Wang explained to An Yong Waves. "One view holds that today's large models use System 1 to handle System 2 problems and get stuck at System 1.5, which is similar to dreaming and produces hallucinations. Autoregressive models restrict you to outputting one token and then building only on that token." Autoregressive models handle memory poorly and cannot plan their answers, let alone carry out multi-step reasoning.
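To make "output one token, then build only on that token" concrete, here is a minimal sketch of greedy autoregressive decoding. It assumes a hypothetical toy score table (`NEXT_TOKEN_SCORES`) that looks only at the previous token. A real LLM scores the next token with a neural network conditioned on the whole prefix and often samples instead of taking the maximum, but the left-to-right, commit-as-you-go loop is the same.

```python
# Hypothetical toy "model": next-token scores keyed by the previous token only.
# A real LLM computes these scores with a neural network over the full prefix.
NEXT_TOKEN_SCORES = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.3, "</s>": 0.2},
    "a": {"dog": 0.7, "</s>": 0.3},
    "cat": {"sat": 0.8, "</s>": 0.2},
    "dog": {"ran": 0.6, "</s>": 0.4},
    "sat": {"</s>": 1.0},
    "ran": {"</s>": 1.0},
}


def greedy_decode(max_tokens: int = 10) -> list[str]:
    """Generate left to right, committing to exactly one token per step."""
    tokens = ["<s>"]
    for _ in range(max_tokens):
        scores = NEXT_TOKEN_SCORES[tokens[-1]]
        next_token = max(scores, key=scores.get)  # best token right now, no lookahead
        if next_token == "</s>":
            break
        tokens.append(next_token)  # once emitted, a token is never revised
    return tokens[1:]  # drop the start marker


print(greedy_decode())  # ['the', 'cat', 'sat']
```

Nothing in the loop plans the whole answer before emitting it or backtracks after a poor choice; each step only extends what is already there, which is the constraint Wang is pointing at.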

These limitations can also be understood through a more philosophical lens: when calculating "which is bigger, 9.9 or 9.11," does the large model truly understand what it's doing? Or is it mechanically comparing the 9 and 11 after the decimal point? If the model fundamentally doesn't know what it's doing, then more training is futile.
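The difference between understanding the numbers and "mechanically comparing the 9 and 11 after the decimal point" can be shown with two small functions. This is a toy illustration of the two kinds of comparison, not a claim about what happens inside any particular model; the function names are our own.

```python
from decimal import Decimal


def compare_numerically(a: str, b: str) -> str:
    """Return the larger of two decimal strings by actual numeric value."""
    return a if Decimal(a) > Decimal(b) else b


def compare_fraction_digits_as_integers(a: str, b: str) -> str:
    """The flawed shortcut: read the digits after the point as whole numbers,
    so "11" beats "9" even though 0.11 < 0.9."""
    a_int, a_frac = a.split(".")
    b_int, b_frac = b.split(".")
    if int(a_int) != int(b_int):
        return a if int(a_int) > int(b_int) else b
    return a if int(a_frac) > int(b_frac) else b


print(compare_numerically("9.9", "9.11"))                  # 9.9
print(compare_fraction_digits_as_integers("9.9", "9.11"))  # 9.11
```

Both functions produce a confident answer; only the first one reflects what the numbers mean.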

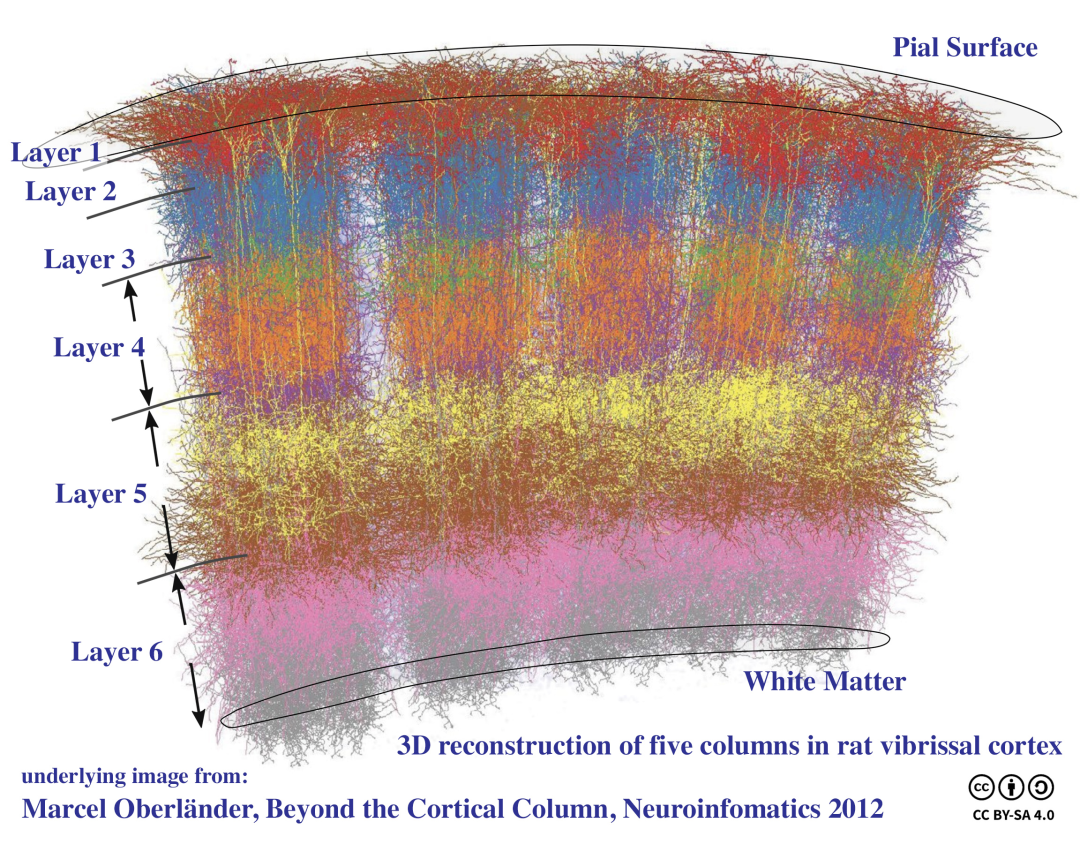

In Wang's view, then, for AI to reach the L2 stage it must abandon the autoregressive Transformer architecture entirely. What Sapient aims to do is achieve AI reasoning by mimicking the human brain.

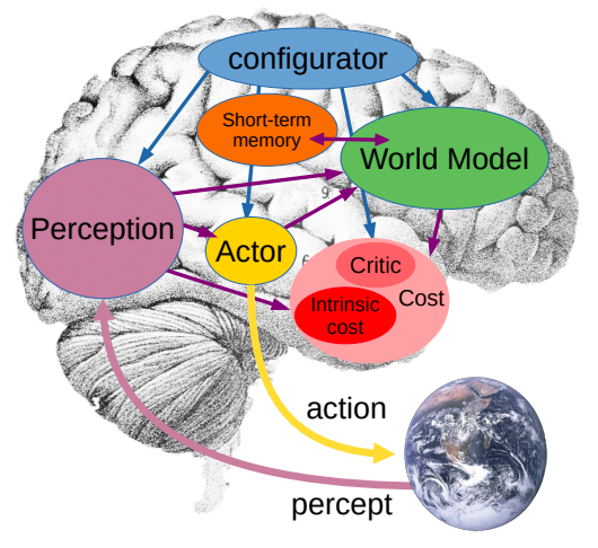

Yann LeCun's World Model theory

"At Tsinghua's Brain and Intelligence Laboratory, I push forward on two fronts at once, drawing on my knowledge of neuroscience and my understanding of System 2," Wang told An Yong Waves. "For any given problem, I first understand how the human brain solves it, then consider how to replicate that with AI."

He further revealed that Sapient's foundational architecture has been mathematically verified. It will be a rare non-autoregressive model with multi-step computation, memory, and tree search capabilities. To scale it up, the team has already completed preliminary experiments combining evolutionary algorithms with reinforcement learning.

The hierarchical recurrent working logic of animal brains

Given what people expect from AGI, perhaps only humans themselves currently meet that standard. That is why Sapient is trying to steer large models toward the way the human brain works.

The Man Who Turned Down Musk

If you've watched Young Sheldon, Wang's story should feel familiar: both are about a genius manifesting in youth, and both are filled with obsession toward their chosen path.

Wang was born in Henan in 2000 and started learning programming at age 8. When he was in high school, OpenAI released GPT-2. At the time, it upended not only many assumptions in deep learning but also Wang's worldview: if a model could generate text that passed as human, was AI about to pass the Turing test? If so, perhaps he could create an algorithm that would solve all the world's hardest problems.

Only later did he learn that such an algorithm was called "AGI."

To him as a high schooler, such an algorithm could eliminate war, hunger, and poverty, and, most urgently, the gaokao. "At the time I felt the gaokao, this mechanical thing, should be left to robots."

This also had to do with the hellish difficulty of the gaokao in Henan. Wang decided to pursue the baosong (direct admission) route instead. He entered algorithm competitions and informatics olympiads, and won the championship of the high school edition of DJI's RoboMaster competition by adding fully automated algorithms to the robots. He ultimately gained direct admission to Tsinghua's School of Computer Science. On the first day of enrollment, the school held a kickoff meeting where teachers spoke passionately from the podium, urging everyone to do well in math and setting the class's goal for the year as achieving the highest math GPA in the grade.

"What's GPA to AGI?" Wang thought. He then transferred to Tsinghua's AIR Institute to study reinforcement learning, and later joined Tsinghua's Brain and Intelligence Laboratory to try fusing reinforcement learning with evolutionary computation. While interning at Pony.AI, he found that the biggest problem in autonomous driving was that decision-making still required humans to tell the model how to decide. If the model couldn't decide on its own, no matter how well it perceived the world, it couldn't lead to AGI.

In his senior year, ChatGPT's arrival showed him the promise of using general capabilities to solve problems. Wang began working on an open-source model called OpenChat. Trained on mixed-quality data without preference labels, this 7B-parameter model required neither manual data annotation nor the extensive hyperparameter tuning of RLHF, and it could match ChatGPT on certain benchmarks while running on consumer-grade GPUs. After release, OpenChat gained 5.2k stars on GitHub and maintained over 200,000 monthly downloads on Hugging Face.

At one point, this small open-source model even crossed paths with Musk.

After Grok's release, Musk posted a screenshot of his own model on X to show off its "humor." He had asked Grok "how to make cocaine," and Grok replied: "Get a chemistry degree and a DEA license... just kidding."

Wang quickly simulated this style with his own model, tagging Musk on X: "Hey Grok, I can be just as funny as you with such small parameter count."

Wang told An Yong Waves that Musk ignored this post but clicked through to their profile, browsed around, and quietly liked another post: "we need more than Transformers to go there / Transformers cannot lead us to the universe."

Later, someone from xAI sent Wang an invitation, wanting him to leverage his OpenChat experience for model development work. To most people this would seem like an incredible opportunity: xAI had money, computing power, even abundant training data, generous compensation, and was at the cutting edge of AI in Silicon Valley. But Wang thought about it and declined. He felt what he wanted to do was overturn Transformer, not follow in others' footsteps.

Wang also met his current co-founder, Austin, through OpenChat. Austin had studied philosophy in Canada and started a men's grooming business, then a cloud gaming startup. When China's domestic large-model boom took off, he returned to China, received offers from several model companies, and helped them recruit talent along the way. That's how he discovered Wang on GitHub. The two online friends met in person and hit it off immediately.

Despite vastly different backgrounds, the two share one thing in common: when they envision a future society where AGI has been realized, it's an ideal state, one where humans have more freedom, where many of the world's current problems are solved.

Sapient's Future

Since Zhilin Yang, founder of Moonshot AI, is also a Tsinghua graduate who started a company building foundation models, our conversation inevitably turned to him. Wang's position stayed consistent: rather than sticking with the Transformer, it is better to blaze a new trail, just as his entrepreneurial idol, Llion Jones, has done.

Llion Jones is one of the eight authors of the Transformer paper and a co-founder of Sakana.AI. At Sakana, he is abandoning the Transformer's technical path entirely, basing his foundation model on "nature-inspired intelligence." The name Sakana comes from the Japanese さかな, meaning "fish," and evokes "a school of fish coming together into a coherent whole from simple rules." Although Sakana has no finished products yet, it completed a $30 million seed round and a $100 million Series A within just half a year.

Since the AI wave began, capital's enthusiasm for AI applications has gradually cooled. As for investment in AI models, Austin told An Yong Waves that the domestic investors he meets fall into two types: those who have invested in the "Six Little Tigers" (China's six leading large-model startups) and stop looking further, and those who are gradually beginning to explore possibilities beyond the Transformer.

As an early mover (the first to "eat the crab," as the Chinese saying goes), Sapient has not found it easy to raise startup capital. Facing investors, before describing its technical advantages and commercial vision, Sapient first has to clearly address three questions. First, GPT's defects: unstable performance on simple reasoning, an inability to solve complex problems, and hallucinations. Second, current AI application scenarios look promising, but the technology can't meet the demand; take Devin, whose 13% accuracy rate falls far short of what it is meant to achieve. Third, the timing: the market already has expectations for AI's future, computing clusters and other infrastructure are ready, and capital is hesitating only because it is stuck on downstream problems that GPT can't solve.

Even with initial startup funding, Sapient still faces the challenge of talent recruitment. The AI talent war in Silicon Valley has reached near-frenzy levels. First there was Zuckerberg personally writing letters to DeepMind researchers, inviting them to jump to Meta; then Google co-founder Sergey Brin personally calling to discuss raises and benefits, just to retain one employee about to leave for OpenAI. Beyond sincerity, ample computing resources and high salaries are also essential.

By one reported figure, median total compensation at OpenAI (including stock) has reached $925,000. Austin told An Yong Waves that Sapient's core members include multiple researchers from DeepMind, Google, Microsoft, and Anthropic. These researchers from around the world have led or participated in numerous well-known models and products, including AlphaGo, Gemini, and Microsoft Copilot. The ability to organize a diverse, global team is also one of Sapient's core advantages.

But for a team challenging GPT, the difficulties go far beyond this. Sapient still has to choose its commercial markets. It focuses most of its energy overseas, especially on the US and Japan. The reason for choosing the US needs no explanation, but Japan has its own core advantages: while the North American AI market is active, competition in generative AI software there is especially fierce. Japan, by contrast, also has complete infrastructure and high-quality talent, and training data rooted in a non-Western culture could become a catalyst for the next technological breakthrough.

Wang remains focused on developing Sapient. His WeChat Moments feed is completely empty, and his profile picture is a deep learning architecture diagram, blurry like a textbook illustration. His cover photo is simply black with white text reading "Q-star," the name of a rumored OpenAI project focused on developing AI's logical and mathematical reasoning.

Wang and his team are working hard toward their next milestone: releasing this entirely new model architecture and running fair benchmarks on reasoning and logic, so that people can see a qualitative leap at comparable parameter counts.

Regardless of how far away that day may be, one thing is certain: the era of Transformer's unchallenged dominance is gradually passing.

Image source | Westworld still

Layout | Nan Yao